Researchers at the Exertion Games Lab of Monash University have created a novel AI-driven AR system to enhance people’s ability to communicate emotions.

“Neo-Noumena” is a neuroresponsive system that uses brain-computer interfacing (BCI), augmented reality (AR), and artificial intelligence (AI) to read a user’s emotional states and then dynamically represent those emotions to others through head-mounted displays (HMDs).

The OpenBCI Cyton and an EEG cap are used to collect EEG data from Neo-Noumena users. Features from the filtered and processed EEG data are then interpreted by a support vector machine classifier to infer the user’s emotional state. Dynamic fractal shapes representative of each user’s emotional state are generated and projected into mixed reality as an “aura” around the user. The HoloLens AR headsets allow users to see their own emotional auras, as well as those of other Neo-Noumena users.
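To make the feature-extraction step above more concrete, here is a minimal sketch of turning a window of EEG samples into per-band power features, the kind of vector a classifier such as a support vector machine could take as input. The article does not say which features Neo-Noumena computes, so the band definitions, the `band_powers` helper, and the 250 Hz sample rate are all illustrative assumptions, and the classifier itself is left out.

```python
import math

# Conventional EEG frequency bands in Hz. This is an assumption for
# illustration; the article does not state which features are used.
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}


def band_powers(samples, sample_rate):
    """Return the mean spectral power per band, using a naive DFT.

    Hypothetical helper: a real pipeline would filter the signal first
    and would normally use an FFT library instead of this O(n^2) loop.
    """
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    powers = {name: [] for name in BANDS}
    for k in range(1, n // 2):
        freq = k * sample_rate / n  # centre frequency of DFT bin k
        name = next(
            (b for b, (lo, hi) in BANDS.items() if lo <= freq < hi), None
        )
        if name is None:
            continue  # bin falls outside every band of interest
        angle = 2 * math.pi * k / n
        re = sum(x * math.cos(angle * i) for i, x in enumerate(centered))
        im = sum(x * math.sin(angle * i) for i, x in enumerate(centered))
        powers[name].append((re * re + im * im) / (n * n))
    return {name: (sum(v) / len(v) if v else 0.0) for name, v in powers.items()}


# One second of a synthetic 10 Hz sine wave, standing in for one EEG channel
# sampled at an assumed 250 Hz.
signal = [math.sin(2 * math.pi * 10 * i / 250) for i in range(250)]
features = band_powers(signal, 250)
```

For this synthetic signal almost all of the power lands in the alpha band, since 10 Hz sits inside the 8 to 13 Hz range. In a full system, feature vectors like `features` from many labelled windows would be used to train the classifier.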

OpenBCI spoke with Neo-Noumena creator Nathan Semertzidis about the project, and his motivations for building tools that augment our ability to communicate emotions.

Q: Where did the idea for this work come from? What made you think to focus on “seeing” emotions?

NS: The focus of my PhD is to understand how we can use brain-computer interfaces to share subjective experiences of consciousness. This is a concept I have come to call “Integrated Consciousness”. When pushed to its furthest logical and technological extent, this might look like some kind of hive mind or cybernetic collective consciousness in the distant future. With science fiction and speculation aside though, my research investigates how systems can use BCI to interpret affective and cognitive states, and encode these states in a way that can be shared with others.

With Neo-Noumena, we pursued integrated consciousness specifically in the domain of emotion, because emotions are tricky to express interpersonally, which leaves room for improvement. From the literature, we learned that a lot of the frustration behind accurately communicating emotion comes from inherent language constraints, wherein a bottleneck in the emotional information bandwidth between people prevents efficient communication. We saw the capabilities of AR as an opportunity to widen this bottleneck by allowing people to see the emotions of others through holographic representation, in addition to the visual and audio cues already used in communication. In essence, we were giving our natural means of emotion communication a technological boost.

Q: How would you explain Valence and Arousal to a layperson unfamiliar with those specific terms?

NS: Usually when people ask me what valence and arousal are, I tell them that valence describes how “pleasant” a feeling is, and arousal describes how “energetic” that feeling is.
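As a small illustration of the two axes, the sketch below places a (valence, arousal) rating into one of four quadrants. The 1 to 9 scale with a midpoint of 5 is assumed here as a typical self-assessment rating range, and the `affect_quadrant` helper and its labels are hypothetical, not part of Neo-Noumena.

```python
def affect_quadrant(valence, arousal, midpoint=5.0):
    """Map a (valence, arousal) rating to a coarse quadrant label.

    Assumes both ratings share one scale (for example 1 to 9) whose
    neutral point is `midpoint`. Hypothetical helper for illustration.
    """
    pleasantness = "pleasant" if valence >= midpoint else "unpleasant"
    energy = "energetic" if arousal >= midpoint else "calm"
    return (pleasantness, energy)


# An unpleasant, high-energy feeling (fear would fall in this quadrant).
print(affect_quadrant(2.0, 8.0))
# A pleasant, low-energy feeling (contentment would fall in this quadrant).
print(affect_quadrant(7.5, 3.0))
```

Splitting each axis at its midpoint is the simplest possible reading of the grid; it throws away how far a feeling sits from neutral, which a continuous representation would keep.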

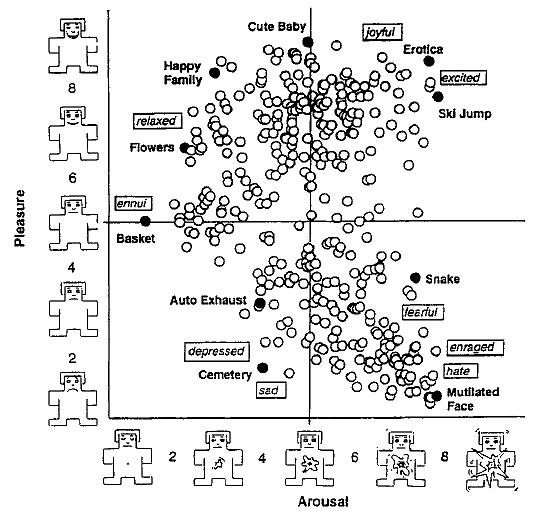

Q: What do you think of this 1995 distribution of images in IAPS as a way to map stimuli/emotions to a 2D valence/arousal grid?

NS: I have mixed feelings about these mappings of emotion. The 1995 Self-Assessment Manikin mapping of emotion onto a 2D valence/arousal grid is what the DEAP database, the database we used to train Neo-Noumena, used to annotate its emotion samples. This means that Neo-Noumena is functionally based on that mapping. Despite this, I personally feel that these mappings detract from the richness and complexity of emotional experiences, not because they are a quantitative translation, but rather because I think there would be a lot more dimensions than two, especially when you consider that the neural mechanisms underlying emotion are very diverse, distributed and varied. I believe that we need to better understand the connections between the phenomenological experiences of emotion and the physiological processes that drive them before we can begin to be confident we have an accurate mapping of emotion. Until then though, I think that the 2D valence/arousal grid is currently the most actionable model we have, especially in applications of affective computing.

Q: If you could add a 3rd axis to the model…what would it be?

NS: Rather than add my own 3rd axis I would use one already proposed. Psychological theory of affect includes a 3rd axis of affect called “motivation”, which is, in short, the degree to which that feeling promotes action. For example, fear would be high arousal, low valence, and high motivation, in that fear is often paired with fleeing behaviour. Similarly, the DEAP dataset that we used to train Neo-Noumena had a third axis called “dominance”, which is similar to motivation. We ultimately excluded this dimension from Neo-Noumena, as it significantly reduced the accuracy of the model with the setup we had available to us, but it would probably have allowed for a classification more true to the user’s experience of emotion if we had more channels and personalised models in use. Nonetheless, I still believe a new approach is something definitely worth considering.

Q: What were some of your favorite reactions, quotes, or things that stood out in the user interviews?

NS: There were a lot of amusing and uplifting stories told in the user interviews. One of my favorite stories was when one participant was describing how they played a game of 500. I’m not entirely familiar with the rules but I know it’s a card game you play in teams. From what I understand it is highly beneficial to read your teammate effectively to anticipate what cards they are going to play, so you can complement their play with yours. This participant described how they and their teammate used Neo-Noumena to help them deduce whether or not their teammate had a good hand.

Another story I found quite amusing was when two participants described how they were in a room together wearing Neo-Noumena, with one playing guitar whilst the other was studying. The one playing guitar asked if it was ok to play and the participant studying said yes. However, when the participant with the guitar did play, the fractals produced by the participant studying suggested they were getting frustrated. They both found this amusing during their interview, and the participant who was studying was quite adamant that it wasn’t the music but the assignment that was the source of the frustration.

Q: What are you working on next? Neo-Noumena or otherwise…

NS: Right now we’re working on a system called PsiNet. It continues on from Neo-Noumena in that it’s a system intended to help share subjective experiences of consciousness. Where PsiNet differs, however, is that we’re moving away from augmented reality and instead looking to neurostimulation, specifically transcranial electrical stimulation. We made this choice to move away from relying on symbolic representations as a means to communicate experience, instead exploring the idea of sharing the experience itself. We’re also hoping to expand our EEG readings to include more cognitive information, such as the interpretation of cognitive load, in addition to affective classification. In practice, the system will be reading affective and cognitive information in one user, and then stimulating neural populations in another user to evoke an approximation of that (albeit very ambiguously). For our upcoming study of PsiNet we’re also expanding user groups from two to five. Ultimately, we intend that through this work, we can enter a future of widespread use of BCI technologies, already armed with knowledge on how these technologies interact with consciousness, and the design praxis to facilitate a unity between all minds.

For more information on Neo-Noumena and the Exertion Games Lab, visit their project page.

You can also watch Nathan’s video presentation of the work as part of CHI 2020.
