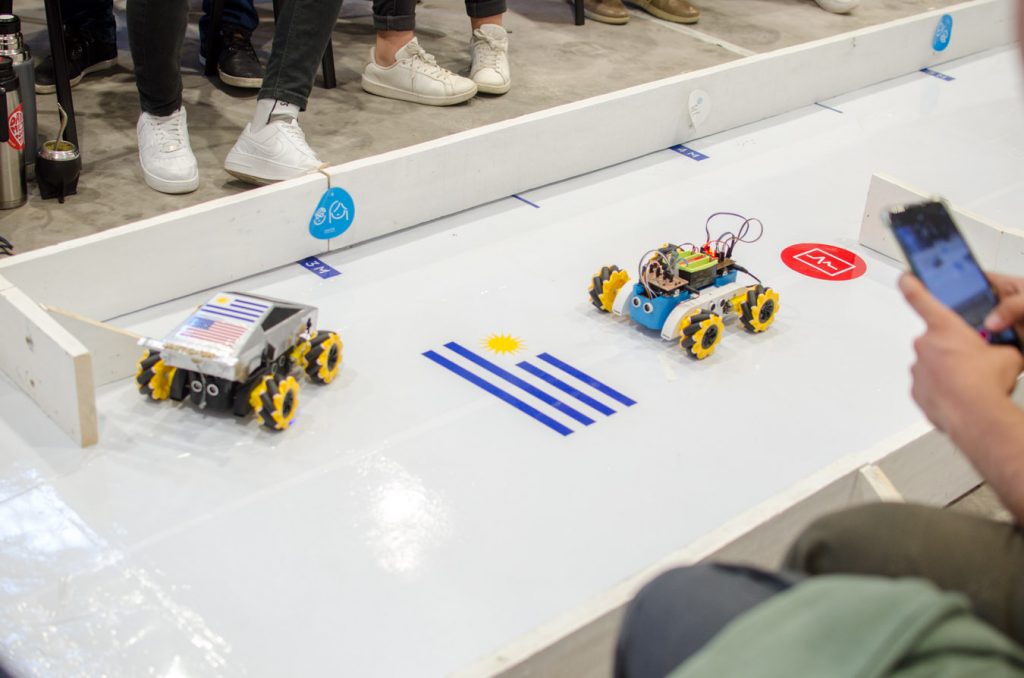

On December 17, 2021, the first BCI-controlled robotic vehicle race was held in Uruguay.

For this project, teams of Biomedical Engineering and Mechatronic Engineering students from Universidad Tecnológica del Uruguay (UTEC) worked for ten months on the design and implementation of a BCI to control robotic vehicles with their brain signals.

Introduction

The BCI was divided into three main modules. In the first stage, the teams split into subgroups to work on the modules in parallel. In the second stage, the three modules were integrated into the complete BCI.

Briefly, the modules had the following functions.

Module 1

- Visual stimulus generation, to evoke Steady-State Visual Evoked Potentials (SSVEPs) in the occipital lobe, where the visual cortex is located. Why SSVEPs? Because I think these potentials are the easiest to evoke and, at the same time, they are easy to process from EEG signals.

- EEG signal acquisition, preprocessing, feature extraction and classification.

Module 2

- Wireless communication between Modules 1 and 3 (the students used Bluetooth).

Module 3

- Vehicle control using the commands classified and sent by Module 1.

- Obstacle monitoring.

Materials and Methods

The EEG signal was acquired with the Ganglion board and the electrode cap, using channels O1 and O2. The digitized data from the Ganglion was sent to a computer running Python 3.9 on Windows 10. We used the BrainFlow library to get the raw data from the Ganglion board.
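As a rough illustration of this acquisition step, the sketch below records a short window from a Ganglion through BrainFlow. It is not the students' code: the serial port name, the window length, and the assumption that O1 and O2 are wired to the first two Ganglion inputs are all hypothetical, and the helper names `samples_for` and `read_window` are made up for this example.

```python
import time

GANGLION_FS = 200  # Hz, nominal Ganglion sampling rate


def samples_for(seconds, fs=GANGLION_FS):
    """Number of samples expected in a window of the given length."""
    return int(round(seconds * fs))


def read_window(serial_port, seconds=4.0, n_channels=2):
    """Record about `seconds` of EEG and return the selected channel rows."""
    # Imported here so the helper above can be used without BrainFlow installed.
    from brainflow.board_shim import BoardIds, BoardShim, BrainFlowInputParams

    params = BrainFlowInputParams()
    params.serial_port = serial_port  # port of the Ganglion BLE dongle, e.g. "COM3"
    board_id = BoardIds.GANGLION_BOARD.value
    # Assumption: O1 and O2 are wired to the first two Ganglion inputs.
    eeg_rows = BoardShim.get_eeg_channels(board_id)[:n_channels]

    board = BoardShim(board_id, params)
    board.prepare_session()
    try:
        board.start_stream()
        time.sleep(seconds)            # let the ring buffer fill
        data = board.get_board_data()  # 2D NumPy array: rows x samples
        board.stop_stream()
    finally:
        board.release_session()
    return data[eeg_rows, :]
```

With the hardware connected, `read_window("COM3")` would return roughly `samples_for(4.0)` samples per channel; the exact count depends on stream timing.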

Preprocessing removed noise components and limited the signal bandwidth. Feature extraction algorithms were then applied to the EEG channels. After this, several classifiers were trained (SVM, LDA and CNN) and the best one was used to classify the SSVEPs from the processed EEG. The libraries used for these steps included NumPy, SciPy, scikit-learn, Matplotlib, pandas and pySerial, among others.

The cases for the stimulator device and the robotic vehicle were designed in 3D software and then printed in PLA and ABS.

The students also made electronic boards as “shields” that plug into Arduino UNO boards. They designed them with Eagle PCB.
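To give an idea of what SSVEP feature extraction and classification can look like, here is a deliberately simple sketch: it measures the signal power at each candidate stimulus frequency and picks the strongest one. This is a stand-in for illustration, not the trained SVM, LDA or CNN classifiers mentioned above. The candidate frequencies (7, 9, 11 and 13 Hz) and the synthetic test signal are assumptions, and only the standard library is used so the example runs anywhere.

```python
import math


def band_power(samples, freq_hz, fs):
    """Power of `samples` at one frequency, by correlation with sine and cosine."""
    mean = sum(samples) / len(samples)  # remove the DC offset
    re = im = 0.0
    for n, x in enumerate(samples):
        angle = 2.0 * math.pi * freq_hz * n / fs
        re += (x - mean) * math.cos(angle)
        im -= (x - mean) * math.sin(angle)
    return (re * re + im * im) / len(samples) ** 2


def classify_ssvep(samples, candidate_freqs, fs):
    """Return the candidate frequency with the highest power, plus all powers."""
    powers = {f: band_power(samples, f, fs) for f in candidate_freqs}
    return max(powers, key=powers.get), powers


# Synthetic 2-second "EEG": a strong 11 Hz response plus a weaker 7 Hz component.
fs = 200
signal = [
    math.sin(2 * math.pi * 11 * n / fs) + 0.3 * math.sin(2 * math.pi * 7 * n / fs)
    for n in range(2 * fs)
]
best, powers = classify_ssvep(signal, [7, 9, 11, 13], fs)
print(best)  # 11
```

Real EEG is far noisier than this signal, which is why band-limiting filters and a trained classifier are usually needed on top of such spectral features.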

Conclusions

This project was very challenging for the students. It is important to mention that none of them had worked with BCIs or knew anything about them; some of them didn’t know anything about signal processing, and no one knew anything about machine learning algorithms, so it was 10 months of hard work for everyone. The students were very, very motivated and they left me very happy with their work. I think they learned a lot!

This project was the first of its kind here in Uruguay (and, I think, in the region), and I hope it’s not the last.

The main objective of this hackathon was for students, university professors and medical professionals to form a group working together on the research, design and development of this kind of assistive technology at UTEC. In Uruguay alone there are more than a hundred thousand people with severe disabilities, and many of them are candidates to use a BCI or another kind of assistive technology.

I think this kind of project is very motivating for young people and introduces them to STEM.

For 2022/2023 we want to hold a second competition, but this time we want to implement a BCI using Motor Imagery to move robotic wheelchairs. For this second edition we will invite students and professors not only from UTEC but also from other universities in Uruguay and, in the future, from Argentina and Brazil.

The project is published at lucasbaldezzari/BCIC-Personal. I will update this repo in the coming weeks with all the code, firmware, boards, 3D designs and more.

I hope you liked it.

Below you can see some pics from the race!

Greetings from Uruguay.

Lucas Baldezzari

Thanks Chuck!

Great project,

I am doing a similar project, but I want to control an RC car kit using eye movements. How do you transfer real-time signals from the OpenBCI GUI to an Arduino?

Thank you!

Hi there, Ninjee! Thank you for your comment.

About your question: I’m pretty sure you can send information from OpenBCI boards to an Arduino directly, but I have never done that; you can look for related topics in the OpenBCI forum. However, you can use a framework like BrainFlow or OpenBCI_Python (https://github.com/openbci-archive/OpenBCI_Python) to get data from the board, and then use a Python library such as pySerial so that the Arduino and the board communicate through the computer.

Hope this helps.

If you have any other questions, please let me know.
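As a rough sketch of the computer-to-Arduino half of that idea, the code below sends one-byte commands over a serial link with pySerial. The command table, port name and baud rate are hypothetical; the Arduino sketch would need to read the same bytes and act on them.

```python
import io

# Hypothetical one-byte protocol; the Arduino sketch must use the same table.
COMMANDS = {"forward": b"F", "left": b"L", "right": b"R", "stop": b"S"}


def encode_command(name):
    """Return the bytes for one command, terminated by a newline."""
    return COMMANDS[name] + b"\n"


def send_command(link, name):
    """Write one command to any object that has a write(bytes) method."""
    link.write(encode_command(name))


def run(port="COM4", baudrate=9600):
    """Open the Arduino's serial port and send a short command sequence."""
    import time

    import serial  # pySerial

    with serial.Serial(port, baudrate, timeout=1) as link:
        time.sleep(2)  # an Arduino UNO resets when the port is opened
        for name in ("forward", "left", "stop"):
            send_command(link, name)
            time.sleep(0.5)


# Dry run without hardware: an in-memory buffer stands in for the serial port.
buffer = io.BytesIO()
send_command(buffer, "forward")
send_command(buffer, "stop")
```

In a real setup, the command name would come from whatever classifies the EEG or eye-movement signal, and `run()` would be replaced by a loop that keeps the port open while commands arrive.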

Good luck!

Lucas
